Displaying the same content at more than one URL is known as duplicate content.

According to Google: “Duplicate content generally refers to substantive blocks of content within or across domains that either completely match other content or are appreciably similar. Mostly, this is not deceptive in origin.”

Note: Duplicate content is common on e-commerce websites, because they often cannot write a different description for every product.

Here is an easy explanation of “content within or across domains” and “content is appreciably similar”.

Content within or across domains:

Condition 1:

If the same content appears at several different URLs within a single domain, it is treated as duplicate content.

Condition 2:

If the same content appears on multiple domains, it is considered duplicate content.

See Matt Cutts’ video on duplicate content for more clarification.

Content is appreciably similar:

Here, similar content means content produced by swapping in synonyms, copying and spinning existing content, or reusing scraps of it.

Sometimes duplicate content is purely technical, for example:

Your post is available at both the “www” and non-“www” hostnames, and at both HTTP and HTTPS URLs.

Impact of duplicate content on your site:

Google does not have a duplicate content penalty. Even so, duplicate content has a negative impact on a website’s health.

Instead of taking action against the site, Google downgrades the ranking of the duplicate content.

Before going deeper, let’s take a look at Google Q&A #4 Duplicate Content:

In this video, Google’s Andrey Lipattsev states: “Google does not have a duplicate content penalty.”

Google Clarification:

There is no duplicate content penalty:

Google doesn’t penalize copied content. However, copied content won’t rank above unique content. Google doesn’t treat this as a penalty; it follows from the fact that ranking depends on uniqueness and quality.

So Google keeps uniqueness in mind. It filters for the most suitable and unique content to place at the top of the SERP.

But how do you write unique content on an e-commerce website, where duplicate content occurs naturally?

To avoid duplicate content on an e-commerce website you will have to make some changes to each page, even when the core product description stays the same.

Duplicate content on product pages is natural, so be careful while writing product descriptions on an e-commerce website.

Sites built on platforms such as Shopify and Drupal are some examples where duplicate content comes up.

On some other sites, such as Quora and Medium, you can see duplicate content as well.

Duplicate content is common on Medium because the platform allows all kinds of content to be posted. The same is true of Quora.

Google rewards uniqueness:

Google gives a clear signal: unique content is rewarded in the rankings.

Filter out duplicate content:

Yes, Google filters out copied content and lowers its rank. The copy can still appear, however, where Google finds a relevant place for it on the SERP.

Duplicate content slows down search results:

The search engine has to make more effort to find the results. The more duplicate pages there are, the more work it takes to surface the unique ones.

The XML sitemap is a technical method to discover new content:

A sitemap helps not only with indexing content; it also helps search engines discover new content.
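As a rough illustration of what such a sitemap contains, the sketch below builds a minimal one with Python's standard library. The function name `build_sitemap` and the example.com URLs are placeholders chosen for this example, and a real sitemap would usually carry more fields (such as last-modified dates).

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a minimal XML sitemap listing each URL in a <loc> element."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Placeholder URLs: list only the preferred version of each page.
sitemap_xml = build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/seo.html",
])
print(sitemap_xml)
```

Listing only the preferred URL of each page keeps the sitemap from advertising duplicates.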

You can check for duplicate content with an external duplicate content checker.

Let’s take a question from a webmaster hangout to make this more relevant.

How Google categorizes the content type:

Copy content

Thin content

Syndicated content

Scraped content

HTTP and HTTPS URL content

WWW and non-WWW URL content

Dynamically generated URL content

Copy content:

Reuse of content with some changes, such as the use of synonyms, spinning, and rephrasing.

Example:

Original:

Google doesn’t penalize for a copy. However, your content doesn’t get ranking over unique content.

Copy:

Google doesn’t fine for a repeat. But your content will not get ranking over unique content.
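To make “appreciably similar” a little more concrete, here is a minimal sketch that compares the two example sentences using Jaccard similarity over their word sets. This is only an illustration: the helper names are made up for this example, and it is an assumption, not a description of how Google actually measures similarity.

```python
import re


def word_set(text: str) -> set[str]:
    """Lowercase the text and return the set of distinct words in it."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of distinct words that two texts have in common (0.0 to 1.0)."""
    words_a, words_b = word_set(a), word_set(b)
    if not words_a and not words_b:
        return 1.0
    return len(words_a & words_b) / len(words_a | words_b)


original = ("Google doesn't penalize for a copy. However, your content "
            "doesn't get ranking over unique content.")
spun_copy = ("Google doesn't fine for a repeat. But your content will not "
             "get ranking over unique content.")
unrelated = "The sitemap helps search engines discover new pages."

print(jaccard(original, spun_copy))   # more than half of the words are shared
print(jaccard(original, unrelated))   # no words in common
```

Even after synonyms are swapped in, the spun copy still shares most of its vocabulary with the original, which is why simple rewording rarely makes content unique.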

Thin content:

Content without added value.

If you write content that doesn’t add value to the topic, Google considers it thin content.

Syndicated content:

Reuse of content with the permission of the author.

Syndicated content is not an SEO issue in itself. However, it can affect ranking.

Example:

Suppose you obtained the author’s permission to reuse a piece of content.

You publish the content on your blog while the author publishes the same content with a rel=canonical tag pointing to the original.

As a result, your copy will be undervalued for uniqueness.

Scraped content:

Use of content without the consent of the author.

Here you can take action against the scraper by reporting it to Google.

HTTP and HTTPS URL content:

It arises when a site moves from HTTP to HTTPS and both versions stay reachable.

It is a technical error and a common problem.

Using HTTPS instead of HTTP is good practice, and the duplication can be fixed with 301 redirects.

WWW and non-WWW URL content:

This technical duplication is similar to the HTTP and HTTPS case and can also be resolved with 301 redirects.
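The idea behind both fixes is that every variant of a URL should map to one preferred form. A minimal sketch of that mapping, assuming HTTPS with “www” is the preferred version (the function name `preferred_url` and the example.com addresses are placeholders):

```python
from urllib.parse import urlsplit, urlunsplit


def preferred_url(url: str, scheme: str = "https", use_www: bool = True) -> str:
    """Rewrite a URL to the preferred scheme and hostname form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    # Strip any existing "www." so it can be re-added consistently.
    bare = host[4:] if host.startswith("www.") else host
    host = "www." + bare if use_www else bare
    return urlunsplit((scheme, host, parts.path, parts.query, parts.fragment))


variants = [
    "http://example.com/seo.html",
    "http://www.example.com/seo.html",
    "https://example.com/seo.html",
    "https://www.example.com/seo.html",
]

# All four variants collapse to a single preferred URL.
targets = {preferred_url(u) for u in variants}
print(targets)
```

In practice the web server would issue a 301 redirect from each non-preferred variant to the URL this function returns.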

Dynamically generated URL content:

Dynamically generated URLs differ slightly from the original URL, usually by query parameters that reflect user preferences, while serving the same content.

Example

https://www.example.com/seo.html?newuser=rue

https://www.example.com/seo.html?order=desc

There can be many more such variations.
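One way to see that these URLs point at the same page is to strip the parameters that do not change the content. The sketch below assumes that `newuser` and `order` are such parameters on this hypothetical site; which parameters are safe to ignore depends entirely on the site in question.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters only change presentation, not the content.
IGNORED_PARAMS = {"newuser", "order"}


def strip_ignored_params(url: str) -> str:
    """Drop query parameters that do not affect the page content."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


dynamic_urls = [
    "https://www.example.com/seo.html?newuser=rue",
    "https://www.example.com/seo.html?order=desc",
]

# Both dynamic URLs reduce to the same underlying page.
print({strip_ignored_params(u) for u in dynamic_urls})
```

The resulting URL is a natural candidate for the canonical tag on every variant of the page.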

How to resolve the duplicate content issue:

You can identify duplicate content using tools like Siteliner.

Once you have identified the duplicate content, you can resolve it with the following methods:

Rel canonical tag

301 redirect

Linking back to the original content

Rel canonical tag:

Insert the rel=canonical tag to identify the original content.

It helps Googlebot identify the original content and reduces the crawl budget spent on duplicates.

Example

<head>

<link rel="canonical" href="URL of original page" />

</head>

The canonical tag should always be placed in the head section of the HTML.
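To check that a page actually declares a canonical URL in its head, you can parse the HTML and look for the tag. The following is a minimal sketch using Python's built-in `html.parser`; the class and function names are invented for this example, and the sample page is a placeholder.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Record the href of the first rel="canonical" link inside <head>."""

    def __init__(self) -> None:
        super().__init__()
        self.in_head = False
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "link" and self.in_head and self.canonical is None:
            attributes = dict(attrs)
            rel_values = (attributes.get("rel") or "").lower().split()
            if "canonical" in rel_values:
                self.canonical = attributes.get("href")

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_canonical(html: str) -> Optional[str]:
    """Return the canonical URL declared in the page head, or None."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


page = """<html><head>
<link rel="canonical" href="https://www.example.com/seo.html" />
</head><body><p>Duplicate of the SEO page.</p></body></html>"""

print(find_canonical(page))
```

A link with rel="canonical" that appears outside the head is deliberately ignored here, matching the advice that the tag belongs in the head.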

301 Redirect:

A redirect forwards one URL to a different URL.

A 301 (permanent) redirect is the best method to point duplicate URLs at the original content.
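Conceptually, the server answers a request for a duplicate URL with status 301 and a Location header naming the original. A minimal sketch of that decision, with a hypothetical redirect table and function name (a real site would configure this in its web server or framework instead):

```python
from http import HTTPStatus

# Hypothetical table mapping duplicate URLs to the original page.
REDIRECTS = {
    "http://example.com/seo.html": "https://www.example.com/seo.html",
    "http://www.example.com/seo.html": "https://www.example.com/seo.html",
}


def respond(url: str) -> tuple[int, dict[str, str]]:
    """Return the status code and headers to send for a requested URL."""
    target = REDIRECTS.get(url)
    if target is None:
        # Not a known duplicate: serve the page normally.
        return HTTPStatus.OK.value, {}
    # Known duplicate: permanently redirect to the original.
    return HTTPStatus.MOVED_PERMANENTLY.value, {"Location": target}


print(respond("http://example.com/seo.html"))
print(respond("https://www.example.com/seo.html"))
```

Because the redirect is permanent, search engines can treat the target URL as the one to index.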

Information shared by Google on duplicate content:

Search Quality Evaluator Guidelines, published on September 5, 2019

“DYK Google doesn’t have a duplicate content penalty, but having many URLs serving the same content burns crawl budget and may dilute signals” (Gary “鯨理” Illyes, 12 FEB 2017)

English Google Webmaster Central office-hours hangout 24 OCT 2014

Google Advice: Duplicate Content on Product & Category Pages

English Google Webmaster Central office-hours hangout Nov 6, 2015

English Google Webmaster Central office hours from September 6, 2019

English Google Webmaster Central office-hours hangout OCT 7 2016

Is the same content posted under different TLDs a problem? 25 May 2011

If I report the same news story as someone else, is that duplicate content? 16 May 2012

English Google Webmaster Central office-hours hangout 9 JAN 2018

More reading:

https://webmasters.googleblog.com/2009/10/reunifying-duplicate-content-on-your.html

https://webmasters.googleblog.com/2011/05/more-guidance-on-building-high-quality.html

https://searchengineland.com/google-panda-demotes-adjusts-rankings-not-devalue-261142

Conclusion

Duplicate content is a common problem, and you can find it on many websites. Google does not penalize it, but it does filter and downrank duplicates, so always try to remove duplicate content from your website to improve your ranking.

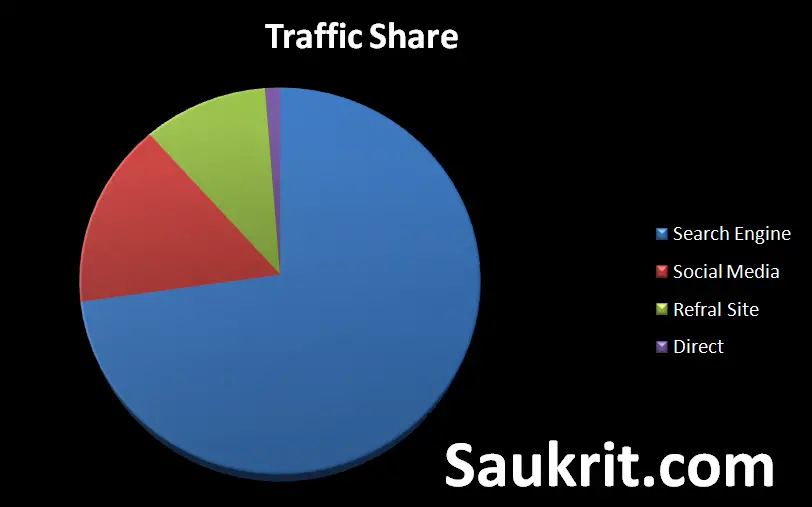
